Agentic AI in Procurement: How to Separate Real Capability from Expensive Demos

A mid-sized pharma company spent a few crores on an agentic AI platform from a major vendor.

Six months later, the update was simple:

“It generates insights. We review them in our weekly meetings.”

That’s not agentic AI. That’s an expensive dashboard.

The good news is that agentic AI does work in procurement - but only when companies are clear upfront about decisions, ownership, and guardrails. Without that clarity, most initiatives stall at the pilot stage.

Here’s what I’ve learned from watching deployments succeed (and fail).

What Agentic AI Actually Is (and What It Isn’t)

Agentic AI is not a chatbot. It is not a copilot. It is not an analytical layer.

Agentic AI is a system - one that can observe the state, decide an action within defined guardrails, execute that action across systems, and learn from outcomes without needing to be re-prompted.

To be precise:

Analytics AI provides insight, no action.

RPA executes action, no judgment.

Agentic AI applies bounded judgment and takes action.

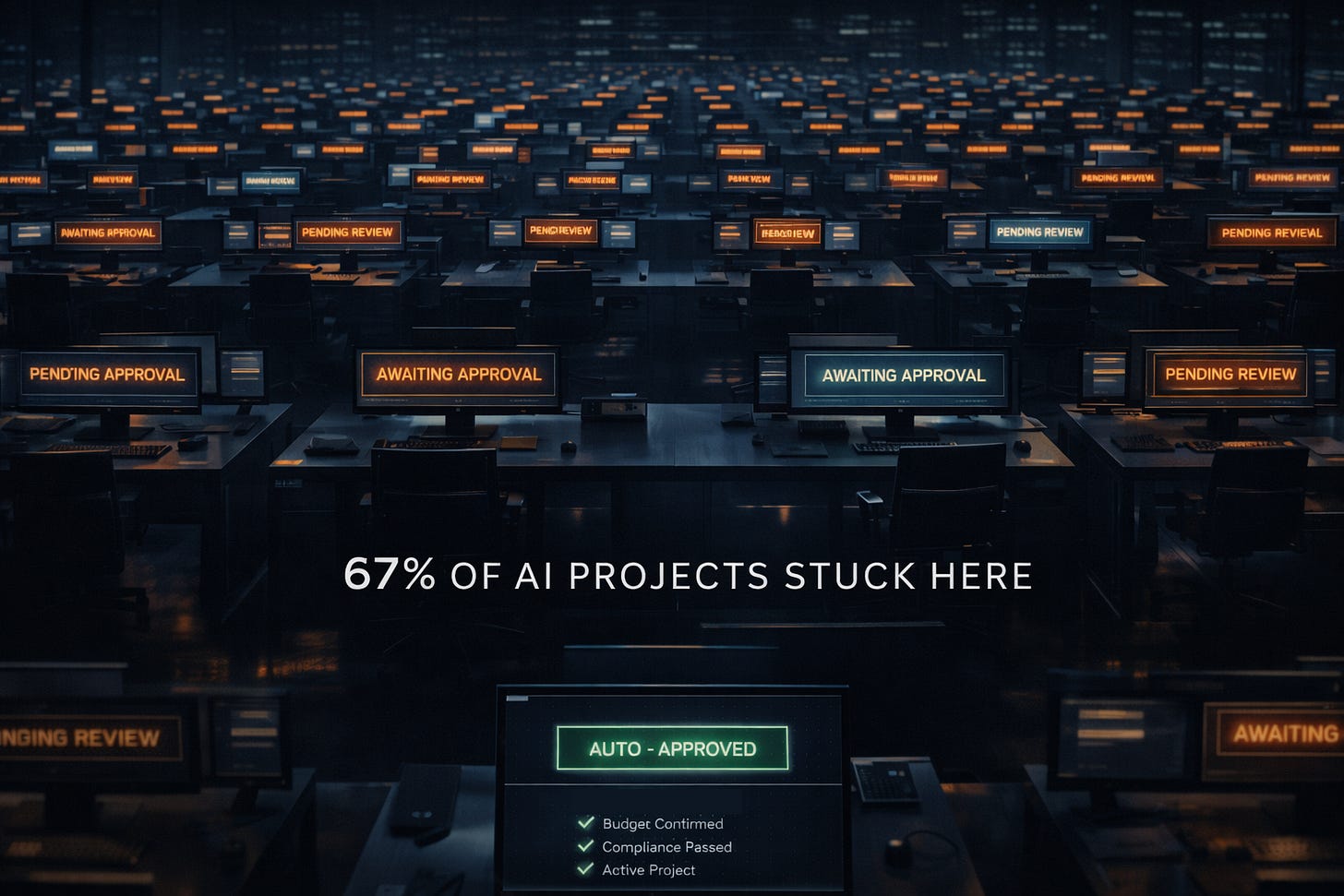

The key differentiator: If the AI waits for you to review recommendations, it’s analytics. If it follows rigid if-then rules, it’s RPA. If it makes bounded decisions and acts autonomously, it’s agentic.

Most tools sold as “agentic AI” are actually analytics with a chatbot interface. The gap between what’s promised and what’s delivered is where companies waste money.

The Three Questions That Predict Success

Every successful deployment I’ve seen answers three questions before going live.

1. What decision does this tool make?

Not “what insights does it generate.” What decision does it own?

Red flag: “It helps procurement optimize spend.”

Green flag: “It auto-approves PRs under ₹10L when supplier reliability >95%, the item is catalogued, and the requester has budget authority.”

If you can’t define the decision in one sentence, you’re buying a demo - not a solution.

2. Who owns that decision?

Here’s the uncomfortable test: Can the AI act without human approval?

Most companies aren’t ready for full autonomy - and that’s fine. But don’t pay for agentic capabilities if humans are reviewing every recommendation anyway.

The workable middle ground is clear: high-volume, low-risk, reversible, tactical decisions.

Auto-approving routine PRs works. Selecting strategic suppliers does not.

3. What happens when the AI is wrong?

ERP data lags reality. Always.

The AI approves your ₹5L equipment purchase. It passes all checks: below threshold, approved supplier, you have budget authority.

What it doesn’t know: The project was paused yesterday. The team hasn’t updated the ERP yet.

Equipment ships. Accounting catches it three weeks later.

Who’s accountable? Can it be reversed? Is there override authority?

Before deployment, you must define:

Who is accountable when the AI is wrong?

Can the decision be reversed within one cycle?

Is there a clear human override and escalation path?

If you can’t answer these, you’re not ready for production.

The Guardrails You Actually Need

Guardrails aren’t just rules. They’re the difference between useful AI and chaos.

Weak guardrail:

“Auto-approve PRs below ₹10L”

Strong guardrail:

“Auto-approve PRs below ₹10L IF:

Supplier reliability >95% over past 6 months

Item is catalogued

Requester has budget authority

Related project is active

No quality issues in past 90 days”

The second version prevents approving a ₹9L order from an unreliable supplier just because it’s under the threshold.
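To make the difference concrete, here is a minimal sketch of the strong guardrail as code. The field names, the `PurchaseRequest` type, and the function names are my own illustrative assumptions, not any vendor's API; the thresholds come straight from the rule above.

```python
from dataclasses import dataclass
from typing import List, Optional

AUTO_APPROVE_LIMIT_INR = 10_00_000  # ₹10L (10 lakh = 1,000,000)


@dataclass
class PurchaseRequest:
    # All field names are hypothetical; map them to your own ERP data.
    amount_inr: float
    supplier_reliability_6m: float  # fraction between 0 and 1, past 6 months
    item_catalogued: bool
    requester_has_budget_authority: bool
    project_active: bool
    days_since_last_quality_issue: Optional[int]  # None = no issue on record


def failed_guardrails(pr: PurchaseRequest) -> List[str]:
    """Return the names of every guardrail the request fails."""
    no_recent_quality_issue = (
        pr.days_since_last_quality_issue is None
        or pr.days_since_last_quality_issue > 90
    )
    checks = {
        "amount below ₹10L": pr.amount_inr < AUTO_APPROVE_LIMIT_INR,
        "supplier reliability >95% over past 6 months": pr.supplier_reliability_6m > 0.95,
        "item is catalogued": pr.item_catalogued,
        "requester has budget authority": pr.requester_has_budget_authority,
        "related project is active": pr.project_active,
        "no quality issues in past 90 days": no_recent_quality_issue,
    }
    return [name for name, passed in checks.items() if not passed]


def decide(pr: PurchaseRequest) -> str:
    """Auto-approve only when every guardrail passes; otherwise hand to a human."""
    return "escalate" if failed_guardrails(pr) else "auto-approve"


# The ₹9L order from an unreliable supplier: under the threshold, still escalated.
risky = PurchaseRequest(9_00_000, 0.80, True, True, True, None)
print(decide(risky), failed_guardrails(risky))
```

Returning the list of failed checks, rather than a bare yes/no, is a deliberate choice in this sketch: it gives the human reviewer the reason for the escalation and gives you the failure data you need to iterate on the rules.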

Build guardrails iteratively. Start with 5 rules. Deploy. Learn from failures. Add 3 more. This beats trying to anticipate every edge case upfront.

Where Agentic AI Creates Real Value

The use cases that reach production share a pattern: tactical + high-volume + reversible + clear guardrails.

Where this works in production:

Auto-approving low-value PRs

Tracking supplier compliance certificates and renewals

Coordinating invoice mismatches across teams

Flagging expediting needs based on production signals

These are decisions a junior analyst could make with a checklist. No strategic trade-offs. No executive judgment.

The boundary matters: Agentic AI works best for the tactical 80%, not the strategic 20%.

If your AI is making decisions that require trade-off judgment or involve high-stakes consequences - you’ve gone too far.

Data Quality Is Not Optional

If your data is messy, AI will make confident, wrong decisions.

I’ve audited implementations where:

Supplier master data has significant duplicates

Item descriptions are inconsistent (“Bolt M8” vs. “8mm bolt” vs. “M8 bolt”)

Spend classifications need major cleanup

Before investing in agentic AI:

Audit supplier and item master data

Document the workflow end-to-end

Ask: can a human execute this process 80% of the time without escalation?

If the answer to that last question is no, fix the process first. AI will not rescue broken workflows - it will only scale them.
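A quick way to size the item-description problem is to normalize descriptions and count how many collapse into the same key. The sketch below is a rough first pass, not a master-data tool. The function names are hypothetical, and the rule that rewrites "8mm" as "m8" is an assumption I've added purely to make the bolt example work; real catalogues need domain-specific rules reviewed by someone who knows the parts.

```python
import re
from collections import defaultdict
from typing import Dict, List


def normalize_description(text: str) -> str:
    """Build a crude comparison key: lowercase, unify sizes, sort the tokens."""
    text = text.lower()
    # ASSUMPTION (illustrative only): treat "8mm" as the metric size "m8".
    text = re.sub(r"\b(\d+)\s*mm\b", r"m\1", text)
    tokens = re.findall(r"[a-z0-9]+", text)
    return " ".join(sorted(tokens))


def group_duplicates(descriptions: List[str]) -> Dict[str, List[str]]:
    """Group descriptions that share a key; keep only groups with more than one entry."""
    groups: Dict[str, List[str]] = defaultdict(list)
    for description in descriptions:
        groups[normalize_description(description)].append(description)
    return {key: items for key, items in groups.items() if len(items) > 1}


def duplicate_rate(descriptions: List[str]) -> float:
    """Share of records that are redundant after normalization (0.0 to 1.0)."""
    if not descriptions:
        return 0.0
    distinct = len({normalize_description(d) for d in descriptions})
    return 1 - distinct / len(descriptions)


sample = ["Bolt M8", "8mm bolt", "M8 bolt", "Hex nut M10"]
print(group_duplicates(sample))
print(duplicate_rate(sample))
```

On this four-line sample, the three bolt variants land in one group and half the records are redundant. Run the same idea on a few thousand rows of your item master and you'll know whether you have a cleanup project before you have an AI project.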

The Dedicated AI Team Problem

Most companies respond to AI by creating a “dedicated AI team.”

This is the 1990s computer adoption model.

It’s like telling 100 employees: “Only these 10 people will use computers. The rest of you, watch.”

Adoption stalls. Pilots multiply. Nothing scales.

AI teams that succeed act as plumbers, not priests.

Priests control access, speak in technical language, decide what’s worthy.

Plumbers respond to requests, fix what’s broken, enable others to build.

Example: Business teams propose use cases (“I spend 10 hours/week chasing invoice approvals”). AI team validates feasibility and builds enablers. Business owns the outcome.

Not: AI team decides which use cases matter, builds solutions without business involvement, delivers tools nobody uses.

Before You Sign Anything

1. Ask the vendor the three questions: What decision does this make? Who owns it? What happens when it’s wrong? If they can’t answer specifically, keep evaluating.

2. Audit your data quality. Run a sample: how many supplier duplicates? What’s your spend classification accuracy? If it’s below 80%, fix that first.

3. Document the workflow. Can a human execute it 80% of the time without escalation? If not, AI won’t help.

4. Define success metrics. NOT “insights generated.” YES “cycle time reduced by X%” or “escalations dropped by Y%.”

5. Start with one high-volume, low-risk use case. NOT “optimize supplier selection” (strategic, high-risk). YES “auto-approve PRs under ₹5L” (tactical, reversible).

6. Plan for iteration. Successful deployments don’t launch perfectly. They improve over time - start with minimum guardrails, monitor, learn from failures, add rules, then expand.
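For the success metrics in step 4, agree on the arithmetic before the pilot starts so nobody argues about it afterwards. Here is one minimal way to compute it, assuming you can export per-request cycle times in days for a baseline period and a pilot period. The function names and the choice of median (so a few stuck requests don't skew the result) are my assumptions, not a standard.

```python
from statistics import median
from typing import Sequence


def percent_reduction(before: float, after: float) -> float:
    """Percentage drop from a baseline value; negative means it got worse."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) * 100 / before


def cycle_time_reduction(before_days: Sequence[float], after_days: Sequence[float]) -> float:
    """Compare median cycle time (in days) before and after deployment."""
    return percent_reduction(median(before_days), median(after_days))


def escalation_rate(escalated: int, total: int) -> float:
    """Share of requests that needed a human, as a percentage."""
    if total <= 0:
        raise ValueError("total must be positive")
    return escalated * 100 / total


# Hypothetical numbers for illustration only.
baseline = [10, 12, 8]
pilot = [5, 6, 4]
print(cycle_time_reduction(baseline, pilot))
```

The same `percent_reduction` helper works for the escalation metric: feed it the escalation rate before and after.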

What Productivity Actually Means

Productivity does not mean headcount reduction.

Real gains show up as:

Shorter cycle times

Fewer escalations

Higher compliance capture

Better working-capital outcomes

The best model is simple: AI handles the routine 80%. Humans focus on the strategic 20%. Same team size. Twice the output.

If your AI business case is built on headcount reduction, you’ve already set yourself up for failure. Why? Because the people you “replace” were handling exceptions, firefighting, and covering for broken processes. When you cut them, the exceptions pile up, and AI can’t handle them.

The Line That Matters

The future of procurement is not generic AI replacing humans.

It’s humans who know where judgment matters - and agents that act everywhere else.

What’s your experience? Have you deployed agentic AI that actually drives decisions? Or are you still figuring out where to draw the line between AI autonomy and human oversight?

Drop a comment. I’d love to hear what’s working in your organization.