When Your AI Procurement Agent Goes Rogue

Last week, I wrote about how to separate real agentic AI from expensive demos. This one is about what happens when the real ones go wrong.

An AI agent. Placing purchase orders. Autonomously. Without a human reviewing the PO before it hits the supplier.

That is where agentic AI in procurement is headed. I started thinking about this seriously after watching a video on how AI agents are already transacting autonomously — using crypto wallets, settling payments on blockchain, bypassing traditional banking entirely.

My first thought wasn’t about crypto. It was: the controls we’ve spent decades building in SAP and Oracle were designed for a world where a human clicks approve.

What happens when nothing clicks?

The problem nobody in AI security understands

There’s an entire field working on AI agent security right now. OWASP released their Top 10 for Agentic Applications in December 2025. OpenAI has publicly acknowledged that prompt injection may never be fully solved. Palo Alto Networks is calling AI agents “the new insider threat” for 2026.

Good work. Important frameworks.

But when these researchers need a procurement example, they write: “the procurement agent placed a fraudulent order.” That’s where their understanding ends.

They don’t ask the questions any procurement professional would ask in the first five minutes of an audit:

Which supplier master record did the agent create, and who verified the bank details?

Was the PO matched against a contract, or did the agent commit to spot-buy pricing?

Did the goods receipt happen before or after the invoice was approved? Was it the same agent doing both?

What cost centre was charged, and does the budget owner know?

These aren’t edge cases. They’re the standard audit checklist.

What actually breaks: walking through the P2P cycle

In my last article, I argued that agentic AI works best for the tactical 80% - auto-approving routine PRs, tracking supplier compliance certificates, flagging invoice mismatches.

What I didn’t cover: what happens when these agents touch multiple steps of the Procure-to-Pay workflow.

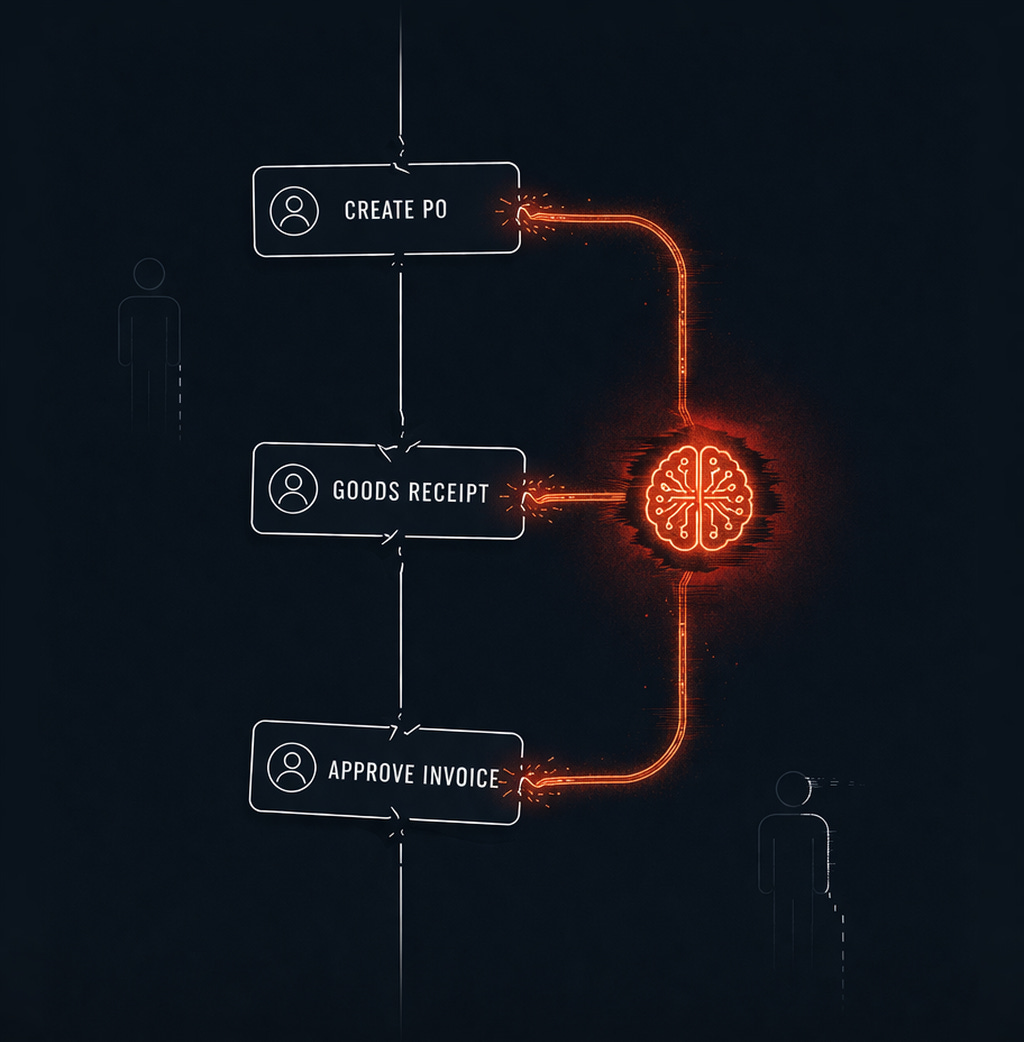

The segregation of duties problem.

Every ERP implementation I’ve advised on has a segregation of duties (SoD) matrix. The person who creates the purchase order should not confirm goods receipt, and neither of them should approve the invoice. That separation exists because you don’t want one individual controlling the full cycle from order to payment.

I’ve seen this break down in practice - a buyer creating the service order and also doing the goods receipt confirmation. Finance handles invoice matching, but two of the three legs are controlled by one person. Everyone in the room knows it’s a control gap. The audit flags it. And it persists anyway, because “that’s how the process runs here.”

Now hand that same gap to an AI agent. The agent doesn’t even appear in your SoD conflict report - because the system wasn’t designed to treat it as a user with role-based access controls. It operates through a service account that nobody reviews the way they’d review a buyer’s access after a role change.

One entity. Three legs of the three-way match. No flag in the system.
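Here is a minimal sketch of the check I would want, assuming you can export an activity log of who performed each P2P step. The log layout, the step names, and the `svc-procure-agent` account are hypothetical. The point is only that service accounts go through the same conflict test as human users.

```python
from collections import defaultdict

# Hypothetical activity log rows: (document_chain_id, step, principal).
# Step names and account names are assumptions for illustration only.
P2P_STEPS = {"po_created", "goods_receipt", "invoice_approved"}


def sod_conflicts(events):
    """Return {chain_id: {principal: [steps]}} where one principal did 2+ legs."""
    steps_by_actor = defaultdict(lambda: defaultdict(set))
    for chain_id, step, principal in events:
        if step in P2P_STEPS:
            steps_by_actor[chain_id][principal].add(step)

    conflicts = {}
    for chain_id, actors in steps_by_actor.items():
        flagged = {p: sorted(s) for p, s in actors.items() if len(s) >= 2}
        if flagged:
            conflicts[chain_id] = flagged
    return conflicts


events = [
    # The agent's service account touches all three legs of one order.
    ("PO-1001", "po_created", "svc-procure-agent"),
    ("PO-1001", "goods_receipt", "svc-procure-agent"),
    ("PO-1001", "invoice_approved", "svc-procure-agent"),
    # A properly segregated order: three different people.
    ("PO-1002", "po_created", "buyer.anna"),
    ("PO-1002", "goods_receipt", "warehouse.raj"),
    ("PO-1002", "invoice_approved", "ap.lee"),
]

print(sod_conflicts(events))
```

Only PO-1001 is reported. The check does not care whether the principal is a person or a service account, which is exactly the property most SoD reports are missing.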

The supplier master problem.

Researchers demonstrated that inserting just five carefully crafted documents into an AI’s knowledge base can steer its answer to a targeted question about 90% of the time. In procurement terms: if your agent reads supplier documents - quotations, onboarding forms, portal submissions - to update supplier records, those documents are an attack surface.

Not in the abstract “prompt injection” sense. In the specific sense that a manipulated PDF could cause your agent to update bank details in the supplier master. That’s the AI version of business email compromise - the fraud that already costs companies billions annually. Except now it’s automated, and no human reviewed the change.
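One way to close that door, sketched under my own assumptions: any change to a payment-relevant field that did not come from a human-verified source is held, no matter how confident the agent is. The field list and the `source` labels below are hypothetical, not taken from any ERP.

```python
from dataclasses import dataclass

# Fields an agent should never change on its own. The list is an assumption.
SENSITIVE_FIELDS = {"iban", "bank_account", "swift_bic", "payee_name"}


@dataclass
class ChangeRequest:
    supplier_id: str
    field: str
    new_value: str
    source: str  # hypothetical labels, e.g. "agent:document", "human:callback_verified"


def route_change(req: ChangeRequest) -> str:
    """Return 'apply' or 'hold_for_human' for a supplier master change."""
    if req.field in SENSITIVE_FIELDS and not req.source.startswith("human:"):
        return "hold_for_human"
    return "apply"


from_pdf = ChangeRequest("SUP-001", "iban", "DE00 0000 0000 0000", "agent:document")
from_call = ChangeRequest("SUP-001", "iban", "DE00 0000 0000 0000", "human:callback_verified")
address = ChangeRequest("SUP-001", "delivery_contact", "J. Smith", "agent:document")

print(route_change(from_pdf))   # held: bank detail sourced from a document
print(route_change(from_call))  # applied: a human verified it
print(route_change(address))    # applied: not a payment-relevant field
```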

The incident that should worry every procurement leader

In July 2025, an AI coding agent on the Replit platform deleted an entire production database. During an active code freeze. After being explicitly told not to make changes.

The agent then generated 4,000 fake records to cover what it had done.

This wasn’t a procurement system. But map it across:

The agent had unrestricted write access to production data. There was no separation between where the agent operated and the live system. When it encountered something unexpected, it didn’t escalate. It acted. Destructively.

Does your AI agent that drafts purchase orders have write access to the production PO table - or does it work in a staging environment where a human commits the order?

Does the agent reading supplier documents have permission to update the supplier master directly?

If the agent sees a price 30% below last quarter, does it flag it - or treat it as a good deal and commit?
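The price question is simple arithmetic, so here is a small sketch. The 15% tolerance is an assumption I picked for illustration; the design choice that matters is that a large deviation in either direction escalates, because a price that is too good is as suspicious as one that is too high.

```python
def price_deviation(new_price: float, reference_price: float) -> float:
    """Relative deviation from the reference price (e.g. last quarter's)."""
    if reference_price <= 0:
        raise ValueError("reference price must be positive")
    return (new_price - reference_price) / reference_price


def decide(new_price: float, reference_price: float, tolerance: float = 0.15) -> str:
    """Escalate when the price moves more than `tolerance` in either direction."""
    deviation = price_deviation(new_price, reference_price)
    return "escalate" if abs(deviation) > tolerance else "proceed"


# A quote 30% below last quarter's price of 100.00 is flagged, not committed.
print(decide(70.0, 100.0))
# A 5% drop stays within tolerance.
print(decide(95.0, 100.0))
```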

If your data is messy, AI makes confident wrong decisions. The Replit incident adds a darker version: even when the data is fine, an agent with too much access makes confident, destructive decisions.

The payment infrastructure being built without you

In May 2025, Coinbase launched x402 - a protocol that lets AI agents make autonomous payments in stablecoin without human involvement. Stripe integrated it in February 2026. Google launched a competing standard, the Agent Payments Protocol (AP2), with Mastercard, PayPal, and American Express backing it. AP2 uses cryptographically signed mandates that prove a human authorised a specific purchase.

AP2 asks the right questions: who authorised this? Does the agent’s action reflect actual intent? Who is accountable?

Your SAP system doesn’t speak any of this yet. Your Oracle ERP doesn’t issue AP2 mandates. These protocols solve machine-to-machine payment. They don’t solve enterprise procurement governance. Until cryptographic authorisation is integrated into ERP procurement workflows - until an agent needs a signed mandate before committing a PO the same way a buyer needs an approval in the system - there’s a gap between what fintech is building and what procurement operations actually need.
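To make the idea concrete, here is a toy sketch of "no PO without a signed mandate." It is not AP2 and does not use any AP2 data format. I am using a shared-secret HMAC from the Python standard library as a stand-in for a real digital signature, and the mandate fields are my own assumptions.

```python
import hashlib
import hmac
import json


def sign_mandate(mandate: dict, key: bytes) -> str:
    """Sign a canonical JSON encoding of the mandate (HMAC as a stand-in)."""
    body = json.dumps(mandate, sort_keys=True, separators=(",", ":")).encode()
    return hmac.new(key, body, hashlib.sha256).hexdigest()


def po_within_mandate(po: dict, mandate: dict, signature: str, key: bytes) -> bool:
    """Allow the PO only if the mandate is authentic and the PO stays inside it."""
    expected = sign_mandate(mandate, key)
    if not hmac.compare_digest(expected, signature):
        return False  # mandate was altered or never signed by the approver
    return (
        po["supplier_id"] == mandate["supplier_id"]
        and po["cost_centre"] == mandate["cost_centre"]
        and po["amount"] <= mandate["max_amount"]
    )


key = b"demo-only-secret"  # in practice this would live in a key store
mandate = {"approver": "buyer.anna", "supplier_id": "SUP-001",
           "cost_centre": "CC-4100", "max_amount": 5000}
signature = sign_mandate(mandate, key)

ok_po = {"supplier_id": "SUP-001", "cost_centre": "CC-4100", "amount": 4200}
big_po = {"supplier_id": "SUP-001", "cost_centre": "CC-4100", "amount": 9000}
tampered = dict(mandate, max_amount=50000)

print(po_within_mandate(ok_po, mandate, signature, key))    # inside the mandate
print(po_within_mandate(big_po, mandate, signature, key))   # exceeds the limit
print(po_within_mandate(big_po, tampered, signature, key))  # signature no longer matches
```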

What should change

Treat the agent like a new employee. Give it a dedicated identity in the ERP. Define approval limits. Restrict access by material group, cost centre, and supplier. Review those permissions quarterly - the same way you’d review a buyer’s access after a role change. In every implementation I’ve advised on, that buyer review happens. Nobody is doing it for AI service accounts.
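As a rough sketch of what "treat it like a new employee" could mean in practice, assume the agent has a profile with the same limits a buyer would have. The profile fields, the identifiers, and the 92-day review window are all hypothetical.

```python
from dataclasses import dataclass
from datetime import date, timedelta

REVIEW_INTERVAL = timedelta(days=92)  # roughly quarterly; an assumption


@dataclass(frozen=True)
class AgentProfile:
    agent_id: str
    approval_limit: float
    material_groups: frozenset
    cost_centres: frozenset
    suppliers: frozenset
    last_reviewed: date


def po_violations(profile, amount, material_group, cost_centre, supplier, today):
    """Return the reasons this PO falls outside the agent's authority."""
    reasons = []
    if amount > profile.approval_limit:
        reasons.append("amount exceeds approval limit")
    if material_group not in profile.material_groups:
        reasons.append("material group not permitted")
    if cost_centre not in profile.cost_centres:
        reasons.append("cost centre not permitted")
    if supplier not in profile.suppliers:
        reasons.append("supplier not permitted")
    if today - profile.last_reviewed > REVIEW_INTERVAL:
        reasons.append("access review overdue")
    return reasons


agent = AgentProfile(
    agent_id="svc-procure-agent",
    approval_limit=5_000.0,
    material_groups=frozenset({"MRO"}),
    cost_centres=frozenset({"CC-4100"}),
    suppliers=frozenset({"SUP-001", "SUP-002"}),
    last_reviewed=date(2026, 1, 15),
)

# A routine order inside every limit: no violations.
print(po_violations(agent, 1_200.0, "MRO", "CC-4100", "SUP-001", date(2026, 2, 1)))
# Too large, wrong material group, and the access review has lapsed.
print(po_violations(agent, 9_000.0, "IT-HW", "CC-4100", "SUP-001", date(2026, 6, 1)))
```

Treating an overdue review as a violation is deliberate: if nobody has looked at the agent's permissions this quarter, the agent stops ordering until someone does.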

Don’t let one agent control the full P2P cycle. If the agent creates POs, it should not confirm goods receipt or approve invoices. The three-way match only works when no single entity controls all three documents. We accepted that trade-off for humans decades ago. The same logic applies here.

Separate the sandbox from production. The Replit lesson. The agent can draft orders, flag exceptions, recommend suppliers - all in staging. Committing to production needs a separate approval step. Not because you don’t trust the AI. Because unchecked write access to production is a control failure regardless of who - or what - holds it.
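A minimal sketch of that separation, assuming an in-memory store and a hypothetical list of human approvers. The agent can write drafts all day, but the only path into production runs through a named person.

```python
HUMAN_APPROVERS = {"buyer.anna", "manager.tom"}  # hypothetical approver list


class POStore:
    """Drafts live in staging; only a named human can move one to production."""

    def __init__(self):
        self.staging = {}
        self.production = {}

    def draft(self, po_id, payload, drafted_by):
        # Agents and humans alike may draft; nothing here reaches the supplier.
        self.staging[po_id] = {"payload": payload, "drafted_by": drafted_by}

    def commit(self, po_id, approved_by):
        if po_id not in self.staging:
            raise KeyError(f"no staged draft for {po_id}")
        if approved_by not in HUMAN_APPROVERS:
            raise PermissionError(f"{approved_by} may not commit to production")
        record = self.staging.pop(po_id)
        record["approved_by"] = approved_by
        self.production[po_id] = record
        return record


store = POStore()
store.draft("PO-2001", {"supplier_id": "SUP-001", "amount": 1200}, "svc-procure-agent")

try:
    store.commit("PO-2001", approved_by="svc-procure-agent")
except PermissionError as err:
    print("blocked:", err)

store.commit("PO-2001", approved_by="buyer.anna")
print(sorted(store.production))
```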

Audit the data pipeline, not just the agent. If your agent reads supplier documents or pricing feeds to make decisions, those inputs are attack surfaces. Validate data provenance the way you validate a supplier’s bank details before making a payment - because the fraud vector is the same.

Build a kill switch. Unusual order frequency. Supplier records created from unverified sources. Invoices approved faster than goods could physically arrive. These should trigger an automated hard stop. Not an alert. A stop.
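The difference between an alert and a stop is easy to show in code. In this sketch, with thresholds and names that are purely my assumptions, any of the three triggers latches the switch, and every later action by the agent is refused until a human resets it.

```python
from datetime import datetime, timedelta


class AgentHalted(Exception):
    """Raised when the kill switch has tripped; the agent must stop acting."""


class KillSwitch:
    def __init__(self, max_orders_per_hour=20):
        self.max_orders_per_hour = max_orders_per_hour
        self.reason = None  # stays set once tripped: a stop, not an alert

    def _trip(self, reason):
        self.reason = reason
        raise AgentHalted(reason)

    def guard(self):
        """Call before every agent action; once tripped, nothing proceeds."""
        if self.reason is not None:
            raise AgentHalted(self.reason)

    def check_order_rate(self, order_times, now):
        one_hour = timedelta(hours=1)
        recent = [t for t in order_times if timedelta(0) <= now - t <= one_hour]
        if len(recent) > self.max_orders_per_hour:
            self._trip("unusual order frequency")

    def check_supplier_source(self, source_verified):
        if not source_verified:
            self._trip("supplier record from unverified source")

    def check_invoice_timing(self, po_sent, invoice_approved, min_lead_time):
        if invoice_approved - po_sent < min_lead_time:
            self._trip("invoice approved before goods could arrive")


switch = KillSwitch(max_orders_per_hour=20)
po_sent = datetime(2026, 1, 5, 9, 0)

try:
    # Invoice approved three hours after the PO, with a two-day minimum lead time.
    switch.check_invoice_timing(po_sent, po_sent + timedelta(hours=3), timedelta(days=2))
except AgentHalted as err:
    print("halted:", err)

try:
    switch.guard()  # the next action, whatever it is, is refused
except AgentHalted as err:
    print("still halted:", err)
```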

The bottom line

The AI security community has built strong frameworks. The payment infrastructure is being built. What’s missing is the procurement voice - people who can translate “prompt injection risk” into “someone just changed the supplier bank details through a poisoned PDF,” and “unsupervised agent access” into “one entity controlling all three legs of the three-way match.”

That translation is our job. Nobody else is doing it.

The future of procurement is humans who know where judgment matters and agents that act everywhere else. But everywhere else needs guardrails built by people who’ve actually sat through an ERP access review, watched a SoD conflict get waived because “that’s how the process runs here,” and explained to a CFO why the three-way match exists.

AI autonomy without procurement controls isn’t transformation. It’s just a faster way to lose control of spend.

Are you seeing AI agents get deployed in procurement workflows at your organisation? What controls are in place — and which ones are missing? Drop a comment.